Here is a reference to a highly interesting article about the systemic problem of proving the impact of online advertising and targeting. Its title: "The new dot com bubble is here: it's called online advertising".

Effects: which ones exactly? KPIs from Google and Facebook do not tell the whole story

You know how it is: you use targeting and all the efficiency KPIs shoot up. More sales for the same media budget. Or more registrations or page visits. But where do these increases come from? What causes them? What do the KPIs from the black boxes of Google and Facebook actually tell us? Researchers at the Kellogg School of Management asked themselves this question and evaluated data sets from U.S. advertisers using scientific and statistical methods. The result is a study entitled "A Comparison of Approaches to Advertising Measurement: Evidence from Big Field Experiments at Facebook".

Tracking advertising impact with Big Data analyses

It will be difficult to argue against the study's results: the database is large, very large, and the experiments are randomized. Experiments from 15 US advertisers were evaluated, covering a total of 500 million users and 1.6 billion ad impressions. The data show how online advertising affects advertising goals such as direct sales and website visits. For this purpose, A/B tests were conducted in various industries: one group of users was shown the advertising, a randomly selected control group was not. The core result: there are two types of "advertising impact", and in both cases users do exactly what the advertising intends, e.g. buy.
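The logic of such an A/B test can be sketched in a few lines of Python. This is a minimal sketch under simplifying assumptions, not the study's actual methodology: users are assumed to be randomly split into a test group that can see the ads and a control group that cannot, and a "conversion" stands for whatever goal is measured (a purchase, a registration, a visit). The names `Group` and `lift` and all numbers are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Group:
    users: int        # users randomly assigned to this group
    conversions: int  # users who reached the goal (purchase, sign-up, visit)

    @property
    def rate(self) -> float:
        return self.conversions / self.users


def lift(test: Group, control: Group) -> dict:
    """Estimate the causal effect of ads from a randomized test/control split.

    Because assignment is random, the control group shows how many users
    would have converted anyway; only the difference is caused by the ads.
    """
    abs_lift = test.rate - control.rate
    rel_lift = abs_lift / control.rate
    # Conversions in the test group that would not have happened without ads
    incremental = abs_lift * test.users
    # Two-proportion z statistic with a pooled standard error
    pooled = (test.conversions + control.conversions) / (test.users + control.users)
    se = math.sqrt(pooled * (1 - pooled) * (1 / test.users + 1 / control.users))
    return {"abs_lift": abs_lift, "rel_lift": rel_lift,
            "incremental": incremental, "z": abs_lift / se}


# Hypothetical campaign: 1.2% conversion with ads vs. 1.0% without
result = lift(Group(1_000_000, 12_000), Group(1_000_000, 10_000))
print(f"relative lift: {result['rel_lift']:.0%}, "
      f"incremental conversions: {result['incremental']:.0f}, z = {result['z']:.1f}")
```

With these made-up numbers the ads raise the conversion rate from 1.0% to 1.2%: a relative lift of 20%, or about 2,000 purchases that would not have happened without the ads. The other 10,000 conversions in the test group would have happened anyway, which is exactly what the control group reveals.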

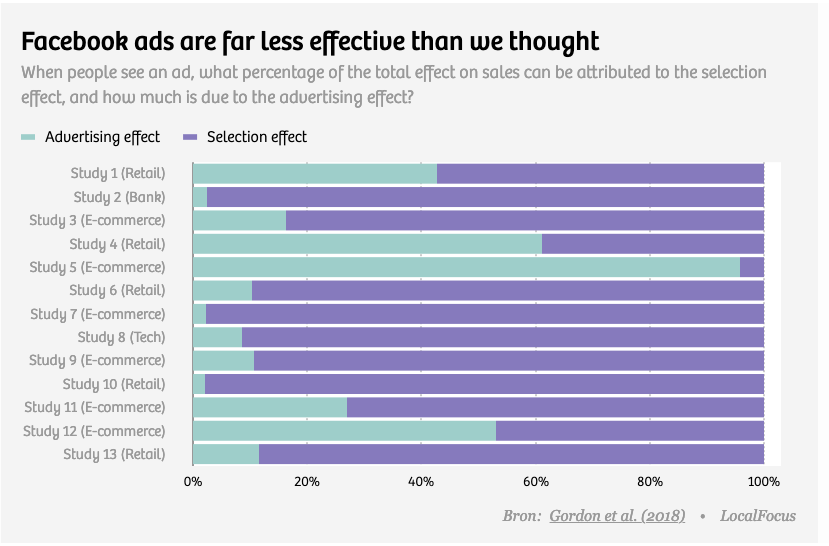

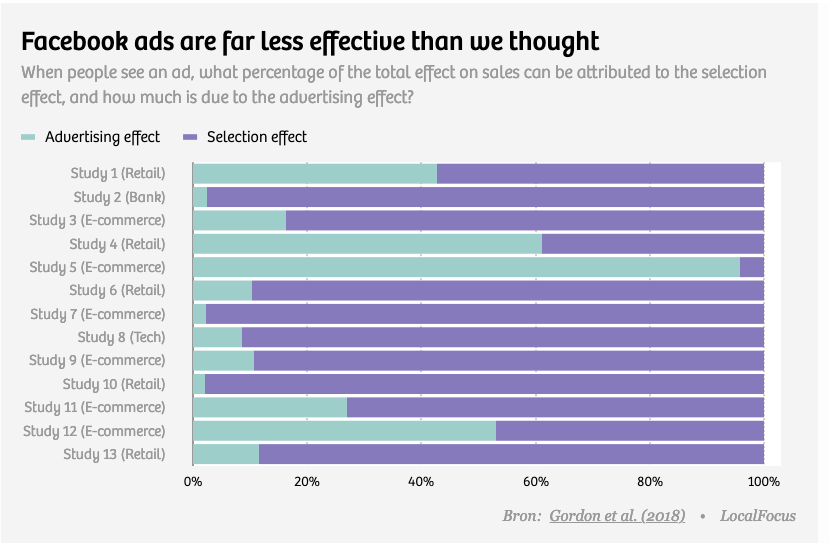

Advertising effect or selection effect? KPIs lump together two types of "effect"

On the one hand, there is the desired advertising effect. Advertising generates sales that would not have taken place without advertising. The causality is clear: I sell because I advertised.

On the other hand, there is the selection effect. The advertising reaches users who would have bought anyway. These users generate sales that click-based KPIs attribute to the advertising as performance, even though it has achieved nothing at all. An example from Google: you bid on your own brand name with AdWords, people click on the paid link, but they would also have clicked on the organic link if it had been in first place. The selection effect means: I pay for contacts and customers that I already have anyway.
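The selection effect can be made tangible with a small simulation. This is a hypothetical sketch, not data from the study: it assumes that 20% of users are "loyal" and buy with 10% probability regardless of ads while everyone else buys with 1%, that targeting shows the ad to 80% of loyal users but only 10% of the rest, and that the ad itself raises the purchase probability by just 0.2 percentage points. Comparing users who saw the ad with those who did not then credits the ad with purchases the loyal users would have made anyway; letting a coin flip decide who sees the ad (an A/B test) removes this bias. The function name `observed_lift` and every probability are illustrative assumptions.

```python
import random


def observed_lift(n_users: int = 200_000, true_effect: float = 0.002,
                  randomize: bool = False, seed: int = 42) -> float:
    """Exposed-minus-unexposed purchase rate in a simulated campaign.

    All probabilities below are illustrative assumptions.
    """
    rng = random.Random(seed)
    buys = {True: 0, False: 0}    # purchases, keyed by "saw the ad"
    counts = {True: 0, False: 0}  # users, keyed by "saw the ad"
    for _ in range(n_users):
        loyal = rng.random() < 0.2
        base = 0.10 if loyal else 0.01  # purchase rate without any ad
        if randomize:
            exposed = rng.random() < 0.5  # A/B test: coin flip decides exposure
        else:
            # Targeting favors users who are likely to buy anyway
            exposed = rng.random() < (0.8 if loyal else 0.1)
        p = base + (true_effect if exposed else 0.0)
        counts[exposed] += 1
        buys[exposed] += rng.random() < p  # True counts as 1
    return buys[True] / counts[True] - buys[False] / counts[False]


print(f"true effect:      {0.002:.4f}")
print(f"targeted, naive:  {observed_lift():.4f}")
print(f"randomized (A/B): {observed_lift(randomize=True):.4f}")
```

Under these assumptions the targeted comparison reports an effect of roughly 5 to 6 percentage points, more than twenty times the true 0.2 points, because the exposed group is mostly made up of loyal buyers. The randomized version lands close to the true effect.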

Here is an overview of the share of "real" advertising impact by sector.

Conclusion: "Is online advertising working? We simply don't know." If you want to know, don't sink your advertising money into the targeting godfathers Google and Facebook; put it into a (well-controlled) experiment instead.

Always test: the research and its results (here as a PDF: Gordon, Zettelmeyer et al. 2018).

- There is a significant discrepancy between the results of the experiments (A/B tests) and the "results" that Facebook's "KPIs" reported for the same campaigns at the same time.

- In 50% of the cases, Facebook's own KPIs "overestimate" the "effect" of Facebook Ads by up to a factor of three. No systematic pattern behind this is (yet) recognizable.

- In contrast to previous research, the study also found cases in which advertising impact was underestimated.

- Reliable "modeling" is unlikely. In a thought experiment, the researchers tested various explanatory models with hypothetical influencing factors. In more than half of the experiments, (unknown) data sources and (unknown) explanatory variables would have had to be as "strong" (predictive) as the best variables in the real experiment to explain the gap.

- A "correct" measurement will likewise not lead to reliable forecasts of advertising impact.
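The comparison behind these findings boils down to a ratio: the lift suggested by a KPI or observational method divided by the lift measured in the experiment. Below is a minimal sketch; the function names, study labels and lift values are made up for illustration and are not taken from Gordon, Zettelmeyer et al. It assumes positive lifts in both columns.

```python
def compare(studies: dict[str, tuple[float, float]]) -> dict[str, tuple[float, str]]:
    """Map each study to (factor, verdict).

    Each value is (rct_lift, kpi_lift) as relative lifts, e.g. 0.2 for +20%.
    factor = kpi_lift / rct_lift: above 1 means the KPI overstates the
    causal effect, below 1 means it understates it. Assumes positive lifts.
    """
    report = {}
    for name, (rct_lift, kpi_lift) in studies.items():
        factor = kpi_lift / rct_lift
        if factor > 1:
            verdict = "overestimated"
        elif factor < 1:
            verdict = "underestimated"
        else:
            verdict = "matched"
        report[name] = (factor, verdict)
    return report


def share_off_by(report: dict[str, tuple[float, str]], threshold: float = 3.0) -> float:
    """Share of studies where the KPI is off by at least `threshold`-fold, in either direction."""
    off = [max(f, 1 / f) >= threshold for f, _ in report.values()]
    return sum(off) / len(off)


# Hypothetical studies: (lift measured in the A/B test, lift suggested by KPIs)
studies = {"A": (0.05, 0.20), "B": (0.20, 0.25), "C": (0.15, 0.09)}
report = compare(studies)
for name, (factor, verdict) in report.items():
    print(f"study {name}: factor {factor:.2f} ({verdict})")
print(f"off by 3x or more: {share_off_by(report):.0%}")
```

Counting deviations symmetrically (a KPI at a third of the true lift is as wrong as one at three times it) is a design choice of this sketch; it captures both the overestimates and the underestimates listed above.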